Adaptive Gradient Harmonization: Mitigating Modality Dominance in Unified Representation Learning

Jan 23, 2025


Lakshya

Abstract

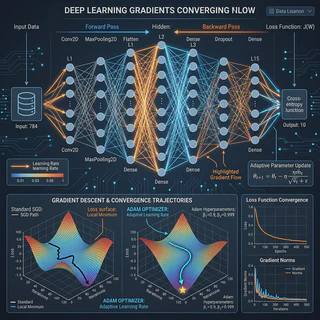

In unified representation learning, distinct modalities often compete for optimization bandwidth during joint training, leading to a phenomenon known as ‘Modality Laziness’ or modality dominance. This paper introduces Adaptive Gradient Harmonization, an approach to mitigate this issue. We propose the Modality Fairness Controller (MFC), which dynamically balances learning rates across the vision and audio modalities based on their real-time training dynamics. We also propose the Overfitting-to-Generalization Ratio (OGR) as a new metric for evaluating multimodal model health. Our experiments on the CIFAR-10 and CREMA-D benchmarks show a 2.3% accuracy improvement and a 40% reduction in modality imbalance.

Type

Publication

Under Review

Overview

This research addresses the critical challenge of Modality Dominance in multimodal neural networks. When training on diverse data types (e.g., Audio and Vision), models often prioritize the modality that is “easier” to learn, neglecting the other.

Key Contributions

- Modality Fairness Controller (MFC): A dynamic mechanism that adjusts per-modality learning rates in real time, based on each modality's training dynamics.

- Overfitting-to-Generalization Ratio (OGR): A robustness metric for unified models.

- Benchmark Results: A 2.3% accuracy improvement and a 40% reduction in modality imbalance on CIFAR-10 and CREMA-D.
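To make the idea of balancing learning rates from training dynamics concrete, here is a minimal Python sketch. It is not the paper's MFC: the update rule, the function name `harmonized_learning_rates`, and the `floor` parameter are all assumptions chosen for illustration. The sketch measures each modality's relative loss improvement since the previous check and scales its learning rate inversely to that progress, so a fast-learning (dominant) modality is slowed down and a lagging one is sped up.

```python
def harmonized_learning_rates(base_lr, losses, prev_losses, floor=0.1, eps=1e-12):
    """Return a per-modality learning rate (hypothetical rule, not the paper's MFC).

    losses / prev_losses map a modality name to its current / previous loss.
    """
    # Relative improvement since the last check; a loss that got worse counts as zero progress.
    progress = {
        m: max(prev_losses[m] - losses[m], 0.0) / (prev_losses[m] + eps)
        for m in losses
    }
    mean_progress = sum(progress.values()) / len(progress)

    # If no modality is improving, there is nothing to rebalance.
    if mean_progress <= eps:
        return {m: base_lr for m in losses}

    rates = {}
    for m, p in progress.items():
        # Above-average progress gives a scale below 1, below-average progress a scale above 1.
        # The floor caps the boost at 1 / floor so a stalled modality cannot blow up its rate.
        scale = mean_progress / max(p, floor * mean_progress)
        rates[m] = base_lr * scale
    return rates


# Example: vision is improving much faster than audio.
rates = harmonized_learning_rates(
    base_lr=0.01,
    losses={"vision": 1.0, "audio": 1.8},
    prev_losses={"vision": 2.0, "audio": 2.0},
)
print(rates)
```

In this example the audio branch receives a larger learning rate than the vision branch. In a real training loop these values would typically be written into separate optimizer parameter groups, one per modality encoder; how the paper's controller measures dynamics and applies its adjustment may differ.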

Authors

Lakshya

(he/him)

AI Researcher & Systems Engineer

M.Tech AI & ML student specializing in Multimodal Representation Learning and Advanced Systems Engineering.